Experts are expressing caution over the growing use of automated technology and artificial intelligence in candidate screening and hiring. MACo tackled the issue at the 2022 Summer Conference.

Experts at the Equal Employment Opportunity Commission (EEOC) and the Department of Labor’s (DOL) Office of Federal Contract Compliance Programs (OFCCP) are warning that automated technology, like artificial intelligence (AI), used in the workplace for hiring and human resource functions could accelerate discrimination.

Wilneida Negrón, Director of Policy and Research at worker organizing platform Coworker.org, recently explained the kinds of automated technologies being used in the workplace, like video screening tools and AI that analyze “facial movements to make assessments about candidates; automated sourcing and recruitment platforms that use public data to make predictions about competencies and chatbots that screen potential applicants.”

The federal agencies hosted a September 13 virtual roundtable on the issue with external stakeholders to discuss “the civil rights implications of the use of automated technology systems, including artificial intelligence, in the recruitment and hiring of workers.” The virtual event was part of the agencies’ joint HIRE Initiative and the EEOC’s AI and Algorithmic Fairness Initiative.

The agencies issued a joint statement on the event that detailed concerns about and implications of AI and algorithmic technology in the workplace:

Participants identified numerous ways that employers use automated technologies to source and screen job applicants, such as machine learning algorithms that review resumes and video interviewing technology. They also explained how discrimination may occur based on protected characteristics, including race, sex, disability, and age when employers use hiring technologies. Potential barriers to equal employment opportunity include issues accessing technology due to the digital divide, job advertisements targeting specific groups, and programs analyzing incomplete datasets that under- or over-represent historically marginalized groups.

Participants also identified how automated technologies might promote equal employment opportunity such as “helping employers better understand applicant pools,” but still offered caution:

Also, participants provided some promising practices to reduce the potential for discrimination. Several speakers noted the importance of ensuring that employers understand how these sophisticated tools are being used to make decisions, address upfront any potential for selection bias, and the need to offer reasonable accommodation to applicants with disabilities.

“To be clear, I am not suggesting that automated hiring systems cannot be used consistently with [diversity, equity, inclusion and accessibility] initiatives,” said Charlotte Burrows, EEOC chair at the September 13 event. “But it’s important to understand the ways in which the assumptions included in the design of some programs that automate employment decisions can affect the diversity of candidates selected.”

A new report from Route Fifty delves even further into the policy debate and how “this technology isn’t necessarily discriminatory, but can be”:

Algorithmic screens could “increase the diversity of our talent pools … if they measure the skills and abilities needed to succeed,” said Jenny Yang, director of the OFCCP, which enforces equal employment opportunity laws among federal contractors. But using “proxies” like education level to screen out candidates can eliminate people who could actually be good at the job.

Digital advertising platforms can also allow employers to show job ads only to certain workers based on race, gender and age, said Peter Romer-Friedman, a principal at Gupta Wessler PLLC, who has worked on a lawsuit centering on Facebook’s practices in this area.

Importantly, additional guidance from the EEOC may be forthcoming:

The EEOC said that it would issue technical assistance on the use of AI in employment decisions when it launched its AI initiative last year. It issued guidance in May with the Justice Department focused on the impact on people with disabilities specifically.

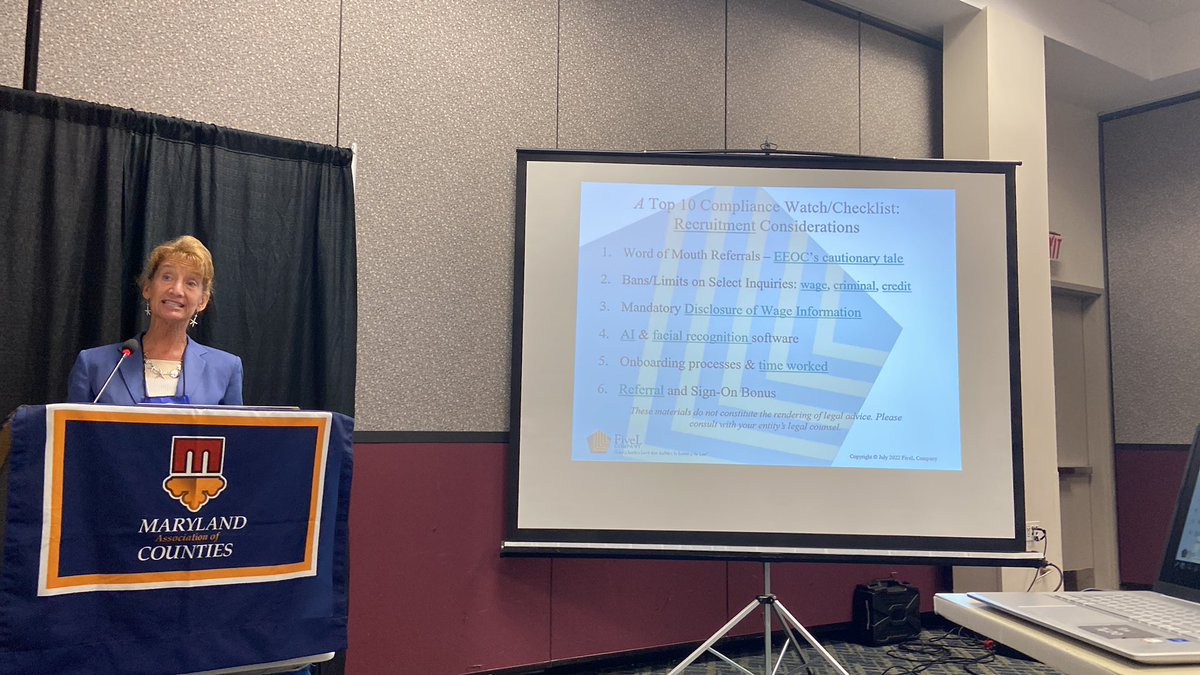

MACo tackled the issue at the 2022 Summer Conference

In the first part of a two-part series, at the 2022 MACo Summer Conference, experts and human resource professionals discussed best practices for hiring and retaining a talented workforce.

During the conference, participants learned about innovative hiring practices to bypass workforce roadblocks and to cultivate a new pool of diverse candidates — including using automated technology in candidate screening and hiring processes.

Christine Walters, Principal of FiveL Company, provided an overview of legal and ethical considerations for local governments utilizing such tools and urged counties to consider the ethical and legal implications of AI and facial recognition software for human resources purposes.